Sound of silver

Us and them, over and over again

Nothing new under the sun

In 1986, Fred Brooks identified two kinds of software problem. Essential complexity is inherent to the thing you’re building; it can’t be simplified away without changing what the thing is. Accidental complexity is everything we pile on top: the tooling, the process, the ceremony we invented to manage the work. Brooks argued that most of the dramatic productivity gains in software had come from removing accidental complexity, and that the essential difficulties would resist any silver bullet.

Forty years on, the distinction holds.

When a director of engineering stares at a roadmap that stopped reflecting reality around week three, her instinct is to fix planning. When a product leader watches teams deliver on time while customer behaviour remains stubbornly unchanged, his instinct is to standardise the product process. When a CTO adopts OKRs and still can’t explain why everything feels stuck, her instinct is to demand more reporting.

These are all attacks on accidental complexity: a better framework here, a new cadence there, a colourful dashboard that shows red, yellow or green against arbitrary targets. Each responds to a real question. None addresses the essential problem: the systems being measured are driven by invisible norms, unclear interdependencies and human relationships.

Stacks all the way down

Software engineers already have a mental model for this kind of layered interdependence. The tech stack is one of the first things a developer learns: a set of layers, each serving a distinct purpose, each affecting the behaviour of the layers above and below it. You don’t debug a slow service by rewriting the frontend. You trace the issue through the layers until you find the causes.

The same structural logic appears elsewhere. The modern data stack breaks data infrastructure into modular layers: ingestion, storage, transformation, analytics. Each layer has a clear responsibility. When something breaks, you know where to look.

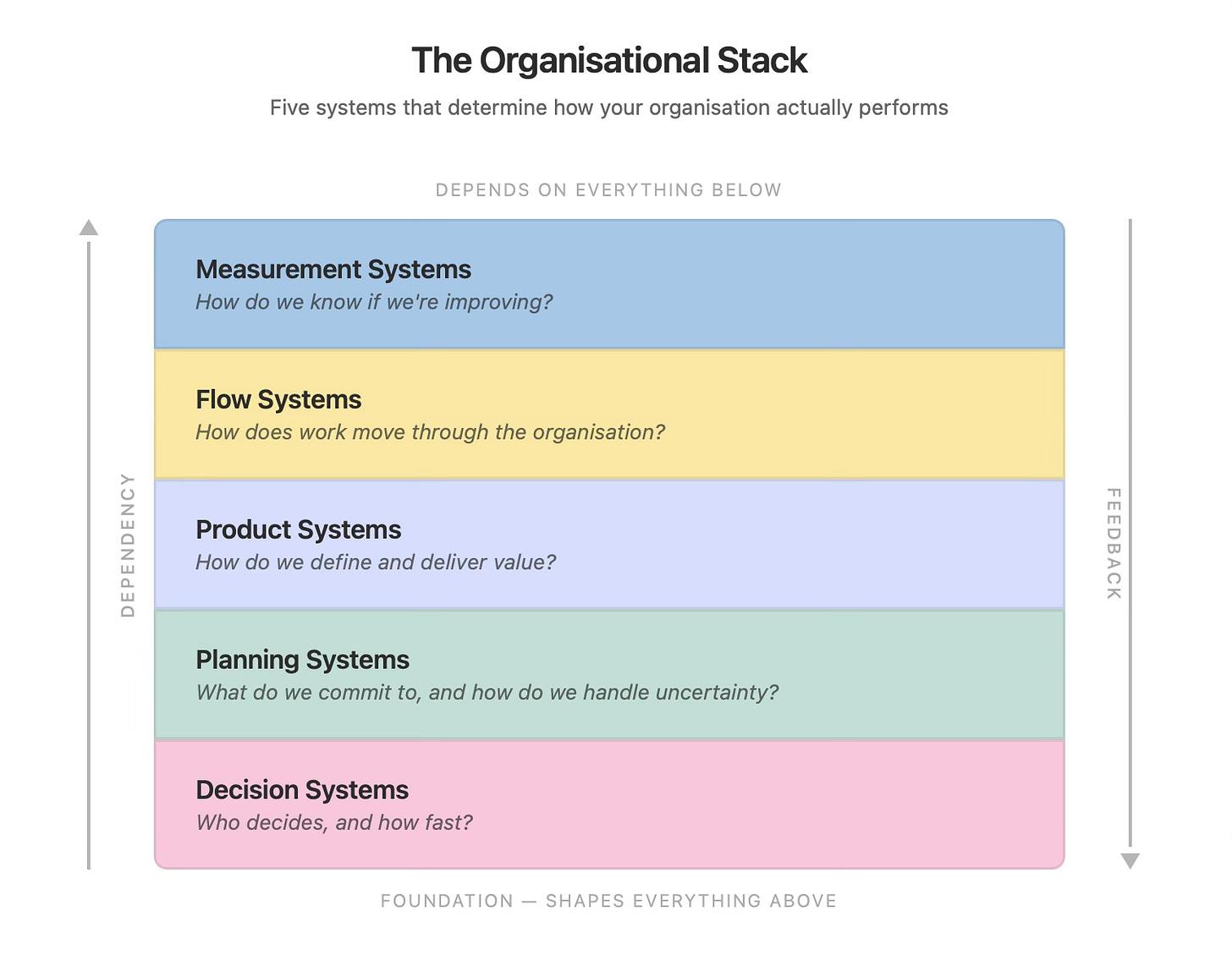

I brought the same instinct to the organisation itself. There’s broad agreement about most of what Octoshark newsletters cover. If the way organisations should work isn’t controversial, why don’t they work that way? Tracing the causes, I found five organisational layers, stacked on top of each other, each one shaping the behaviour of the next. They are independent of the organisation chart. Together they form the structural logic of the organisation, laid out in a way engineers already understand, and they explain why easy fixes tend to fail.

The five systems

Every software organisation runs on these five systems. They’re rarely documented, and mostly embedded in culture and habit rather than policy. But they’re there, and they determine how the organisation actually runs.

Decision Systems

Who decides, and how fast?

This is the foundation of the stack. Every other system sits on top of how decisions get made. In some organisations, a product manager can commit to a direction after a conversation with her team and a check against the strategic objectives. In others, the same decision requires three meetings, a slide deck, and sign-off from someone two levels up who will forget the context by Thursday.

Decision systems aren’t about whether decisions are good or bad. They’re about latency and clarity. How long does it take from “we think we should do this” to “we’re doing this”? Who has the authority? Is that authority real, or does it evaporate the moment a senior leader has a different opinion?

The decision system is often opaque to newcomers. People expect organisations to make rational decisions, but that’s rarely how it works. The decision system often has its own language and its own politics, understood (if at all) only by the initiated. And it shapes everything above it. If your decisions are slow, unclear, or concentrated in too few hands, every other system inherits that, and the effects show up as dysfunction in the higher layers.

Planning Systems

What do we commit to, and how do we handle uncertainty?

Planning sits directly above decisions, and the quality of your planning is constrained by the quality of the decision system underneath it. If decisions take weeks, plans calcify before anyone can act on them or, worse, endless effort goes into plans [while reality gets on with other ideas](https://www.octoshark.net/p/eternal-sunshine-of-the-spotless). Either way, plans become wish lists that nobody owns.

Most planning dysfunction comes from treating uncertainty as a problem to be eliminated rather than a condition to be managed. This manifests as quarterly or annual roadmaps with fixed scope, fixed dates, and no mechanism for learning. When reality diverges from the plan (and it always does), the response is either to ignore the divergence or to panic.

The PandA framework addresses this directly, structuring work across a cone of uncertainty, from the possible to the promised, with built-in appraisal of outcomes. But flexible planning frameworks only function if the decision system authorises teams to make and own the choices the framework demands. This is why ‘fixing planning’ sounds seductively simple but usually adds more process to little effect.

Product Systems

How do we define and deliver value?

This is where leadership tends to think the problem lives. The complaints are familiar: product teams aren’t delivering the right things, the roadmap doesn’t connect to strategy, and teams are shipping but the needle isn’t moving.

Product systems are about the practices, rituals, and habits that determine how a team discovers what to build, validates whether it’s worth building, and measures whether it worked. Outcomes over outputs is the aspiration; product systems are the machinery that either makes that aspiration real or ensures that outputs are rewarded.

A team can have the right instincts about product thinking and still fail if the planning system commits them to fixed deliverables before they’ve had time to discover what matters. Or if the decision system means every pivot requires re-approval from a steering committee that meets monthly.

Flow Systems

How does work move through the organisation?

Flow is about the mechanics of delivery: how work moves from idea to production, where it gets stuck, what creates friction. Value stream mapping, bottleneck analysis, dependency management and team topologies all live here.

This is the layer that most agile transformations target. Teams adopt Scrum or Kanban, measure cycle time, hold retrospectives. And sometimes things get better. But when flow improvements hit a ceiling, it’s usually because the constraint is in a layer below. Teams are waiting for decisions. Dependencies exist because the planning system didn’t account for them. Handoffs proliferate because the organisation was designed for a world where specialists sat in functional silos and work was thrown over walls.

Improving flow without addressing the systems beneath it is like optimising a database query when the real bottleneck is network latency. You’ll see small gains. You won’t solve the problem.

Measurement Systems

How do we know if we’re improving?

Measurement sits at the top of the stack because it depends on everything below. What you measure is shaped by what your product system values. How you act on measurement is shaped by your decision system. Whether measurement leads to learning or to blame is shaped by the culture that runs through every layer.

There’s no shortage of things to measure: DORA metrics, SPACE, DevEx, NPS, OKR achievement rates. The question is whether the measurement feeds back into the system in a way that produces change. In healthy organisations, measurement creates a feedback loop: we thought X would happen, Y happened instead, here’s what we’re going to do differently. In unhealthy ones, measurement is a reporting exercise. Numbers go up to leadership. Nothing comes back down.
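To make the difference between a feedback loop and a reporting exercise concrete, here is a minimal Python sketch. The `Appraisal` record and the `reporting_only` helper are hypothetical illustrations, not part of DORA, SPACE or any other framework named above, and the entries are invented examples. Each appraisal captures the three parts of the loop (what we expected, what happened, what we’ll do differently); an appraisal with no follow-up action is the ‘numbers go up, nothing comes back down’ case.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Appraisal:
    """One turn of the measurement feedback loop (hypothetical structure)."""
    expected: str                      # "we thought X would happen"
    observed: str                      # "Y happened instead"
    next_action: Optional[str] = None  # "here's what we're going to do differently"

    def closes_loop(self) -> bool:
        # A measurement only feeds back if it produces a concrete, non-empty change.
        return bool(self.next_action and self.next_action.strip())


def reporting_only(appraisals: list[Appraisal]) -> list[Appraisal]:
    """Return the appraisals that were reported upwards but changed nothing."""
    return [a for a in appraisals if not a.closes_loop()]


if __name__ == "__main__":
    # Invented example entries, for illustration only.
    history = [
        Appraisal(
            expected="New onboarding flow lifts week-one retention",
            observed="Retention flat; drop-off moved to the billing step",
            next_action="Interview users who abandon at billing before the next plan",
        ),
        Appraisal(
            expected="Faster releases improve NPS",
            observed="NPS unchanged",
        ),
    ]
    for a in reporting_only(history):
        print("No feedback:", a.expected)
```

Run as a script, it flags only the second entry: a number was collected, but nothing came back down.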

A measurement system that exists in isolation, disconnected from the planning system, the product system, and the flow of work, is performance theatre. It creates the appearance of rigour without producing any learning.

The stack in action

An engineering director is in a performance review. Her teams are delivering regularly. Cycle times are reasonable. But the business isn’t growing, and her VP is frustrated and tells her so. Her instinct is to look at what teams are building (the product system) or how fast they’re building it (the flow system).

She starts there and introduces outcome-based planning: teams target outcomes defined by customer behaviour and measure the results. On paper, it looks great.

Six months later, nothing has changed. Teams still can’t act on what they learn because the quarterly planning cycle has already committed them to the next set of deliverables. The planning system overrides the product system. And the planning system is rigid because the decision system beneath it requires executive sign-off on any scope change, which takes three weeks and a business case.

The symptom was in the product layer. The cause was in the decision layer. The stack makes that visible.

Or take a different organisation. This one has invested heavily in flow: mature Kanban practices, solid CI/CD, team topologies designed to minimise dependencies. Delivery is smooth. But the teams are delivering the wrong things. Measurement shows activity but not impact. Nobody is asking whether the work matters, only whether it shipped.

The flow system is working perfectly. The measurement system is tracking the wrong signals because the product system never defined what success looks like beyond delivery. And the product system is output-focused because the planning system rewards teams for clearing their backlog, not for achieving outcomes.

Every layer affects every other layer. You can’t fix one in isolation.

Reading your own stack

The value of the stack metaphor isn’t in the framework itself. It’s in what it lets you see. And seeing it is harder than it sounds.

Most organisations have a reasonable understanding of their individual systems in isolation. They know their planning process. They know their delivery cadence. They have metrics dashboards. But because they’re living inside the ecosystem, they can’t see how the layers interact, where one system’s design creates dysfunction in another, and where an intervention at the wrong layer will be absorbed by the system without producing change.

There are good reasons for that blindness. The person who owns the decision system is usually senior enough that questioning it feels like questioning them. The planning system was designed by people who have since moved on; it persists because nobody remembers why it works this way, only that it does. Flow systems are often the product of years of accumulated workarounds, each one a rational response to a constraint that may no longer exist. And measurement systems are political: what you choose to measure signals what you value, and changing that signal threatens whoever built their credibility on the old numbers.

Self-diagnosis requires looking at layers you benefit from not examining. That’s why it rarely happens from inside the system. It’s also why, when it does happen, the instinct is to start with the layer that’s easiest to change rather than the one that matters most.

Sometimes that instinct is the right one. Fixing accidental complexity first (a better standup cadence, a cleaner backlog, a dashboard that actually tracks outcomes) can build credibility and momentum, even when it doesn’t address the essential problem. The danger is in stopping there and mistaking the improvement for the solution.

The organisational stack gives you a diagnostic lens for going further. When something isn’t working, trace it through the layers. If teams can’t pivot when they learn something new, is that a product system problem or a planning system problem? If planning is rigid, is that because the decision system requires certainty? If measurement isn’t driving learning, is that because the metrics are wrong or because nobody acts on what the metrics reveal?

The answers will point you to the layer where the real work needs to happen. It’s rarely where you first thought.
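For readers who think in code, the tracing questions can be sketched as a walk through the stack. This is a minimal Python sketch under loud assumptions: that each layer can be judged ‘constrained’ or not by asking questions like the ones above, and that the deepest constrained layer at or below the symptom is the place to start. `LAYERS` and `trace_root_layer` are hypothetical names, not an established method.

```python
from typing import Optional

# The five systems, ordered from the foundation of the stack upwards.
LAYERS = ["decision", "planning", "product", "flow", "measurement"]


def trace_root_layer(symptom_layer: str, constrained: set[str]) -> Optional[str]:
    """Find the deepest constrained layer at or below the symptom's layer.

    `constrained` holds the layers that, on inspection, are limiting the
    layers above them (an assumption: real layers aren't simple flags).
    Returns None if nothing at or below the symptom is flagged.
    """
    if symptom_layer not in LAYERS:
        raise ValueError(f"unknown layer: {symptom_layer}")
    unknown = constrained - set(LAYERS)
    if unknown:
        raise ValueError(f"unknown layers: {sorted(unknown)}")
    top = LAYERS.index(symptom_layer)
    # Walk from the foundation up, so the first match is the deepest constraint.
    for layer in LAYERS[: top + 1]:
        if layer in constrained:
            return layer
    return None


if __name__ == "__main__":
    # The engineering director's case: the symptom shows up in product,
    # planning can't absorb learning, and scope changes need exec sign-off.
    print(trace_root_layer("product", {"product", "planning", "decision"}))
```

Run as a script, it prints `decision`, matching the engineering director’s story: the symptom appeared in the product layer, but the deepest constraint was executive sign-off. Returning only the lowest layer is a deliberate simplification. Since every layer affects every other, the result is where to start looking, not the only thing to fix.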

Watch the tapes

Why don’t organisations work the way we want them to? Because they’re essentially complex ecosystems. Making the layers coherent with each other is hard. It means shaping the informal organisation so that decisions flow at a pace planning can use, planning leaves room for product thinking, product thinking shapes what gets measured, and measurement feeds back into decisions.

That coherence is rare. It’s rare because nobody looks at the whole stack. They look at the layer that’s causing pain today, attack the accidental complexity, and wonder why the essential problem remains.

Brooks was right. There is no silver bullet. But there is a diagnostic, and it starts with seeing the stack that was always there.