AmplificAItion

Every building requires foundations

“Get the AI to do it,” is both the most exciting and most frustrating sentence in product development today.

There’s possibly no limit to what we can get “the AI” to do. The promise of AI is extraordinary. We are in the foothills of a revolution that will drive changes in how everything works. The organisations that harness AI well will accelerate away from those that can’t.

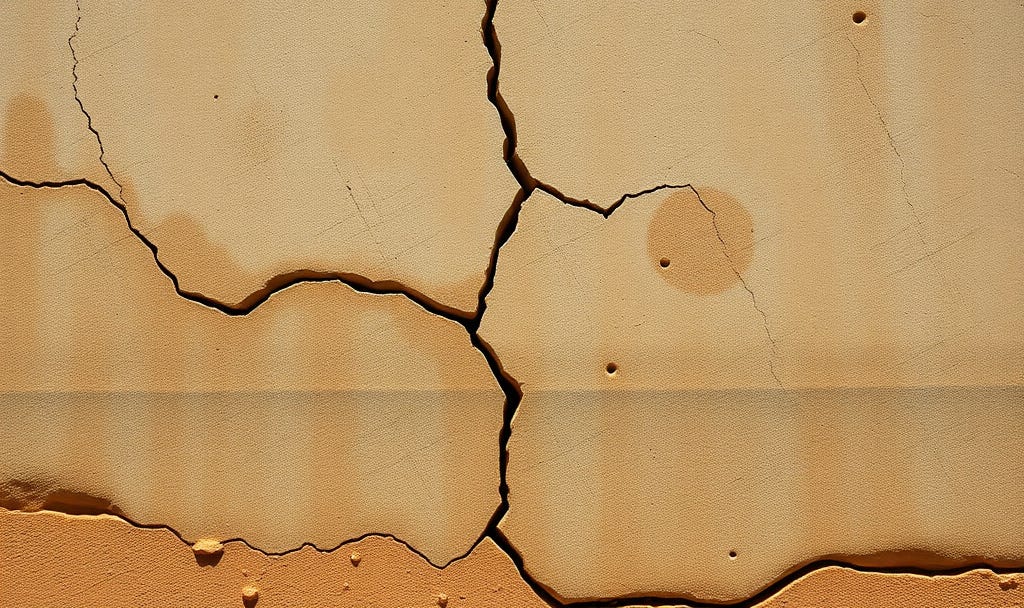

But there is a gap between the promise and the reality, and it is not a technology gap. It is a crack in the foundations that prevents us building an AI-driven future.

Unintended consequences

Over the past two decades, organisations moved fast and broke things. They prioritised delivery over documentation. Knowledge lived in people’s heads rather than being systematically available, particularly when things were fixed or a new feature was added to an existing application. Tribal knowledge replaced written understanding.

This wasn’t an intentional hoarding of information. The choice to prioritise the next-most-important-thing over documentation was always rational. Each of these decisions made sense at the time. None of them were malicious. There was pressure to get the next customer, build the next marketable moment. The people who understood the system were still in the building. There was no stress about documentation. Why write it down when you could walk over and ask? Why worry about documenting something you might pivot away from?

Nobody anticipated that one day, in order to keep up, you’d need to be able to feed documentation back to the machines.

LLMs need well-documented systems in order to generate reasonable insights. AI needs something to reason with. The cumulative effect of years of underinvestment in documentation, data quality, and engineering practice has created an environment where AI often has remarkably little to work with. The effect is most pronounced in organisations that have been around for over a decade and don’t operate in highly regulated industries. The organisations most desperate for AI to transform them are often the least able to make the leap. There’s nothing for the AI to leverage. Or worse, the documentation that does exist is outdated and contradictory. Garbage in, garbage out.

The amplifier

AI does not fix problems. It amplifies whatever it finds.

Give an LLM clean, well-structured data and coherent documentation and it will do remarkable things. It will surface patterns humans would miss. It will generate code that respects the architecture. It will accelerate onboarding, speed up incident response, make sense of complex systems. It will do what the pitch decks promise.

Give it a codebase nobody fully understands, documentation that was last updated three years ago, and knowledge that lives in four people’s heads, and it will confidently amplify the confusion. It will generate plausible nonsense. It will produce code that looks right but ignores dependencies that were never written down. It will automate the wrong things faster than you ever could manually.

If there’s not enough information for a human to understand, there’s not enough information for the machine to explain.

An amplifier is only as good as the signal it receives.

The gap

Plenty of data is being published about how AI is failing to make the leap, and about how most AI initiatives fail. From a certain vantage point, this looks like a discernible pattern.

An organisation decides it needs an AI strategy. An executive steps up. A team is formed. Tools are procured. A pilot project is selected. And then, the pilot stalls. Not because the AI doesn’t work, but because the team discovers that the data is incomplete, required documentation is missing, the system boundaries are unclear, and nobody can explain how the thing they want to improve actually functions.

The AI holds up a mirror, and the organisation doesn’t like what it sees.

This is the moment where many organisations either retreat (“the technology isn’t ready yet”) or push through with brute force, throwing people at the problem of cleaning up decades of technical and knowledge debt in a matter of weeks. Neither approach works.

In order to succeed, we need to acknowledge that the foundations need work. The model that disregards documentation and written understanding has run its course. We’re moving into a new world of well-documented systems, and this work has value far beyond generating better AI outcomes. In a particularly nice irony, it’s possible to use AI to generate this documentation.

Clean documentation helps humans too. Well-structured data supports better decisions with or without a model. Clear system boundaries make teams more effective regardless of whether an LLM is in the loop.

The opportunity

For years, the benefits of investing in documentation, data quality, and engineering practice have been undervalued. Making that investment has always been the right thing to do. The value of risk reduction has always been well understood, but it has struggled to compete with the next feature on the roadmap.

AI changes the calculus. The investment in good practice now has an urgent, concrete business case. The organisations that have clean data will be able to deploy AI effectively. This helps explain why regulated industries have reported great efficiencies with AI. The organisations that have well-documented systems will be able to onboard AI tools that actually understand what they’re working with. The organisations that invested in the boring fundamentals are about to accelerate in a way unavailable to those that didn’t.

This is not a small advantage. It compounds. An organisation with good foundations deploys AI effectively, which generates better data, which improves the AI, which accelerates the organisation further. An organisation with poor foundations struggles to deploy AI at scale, falls further behind, and finds the gap widening with every quarter.

Good practices have always been the right investment. AI has made them an urgent one. The organisations that treated documentation as a luxury and tribal knowledge as acceptable are about to discover the cost of those decisions. Not because they were wrong at the time, but because the world changed around them.

If you want the AI to do it, you need to give it something to work with.